Let a thousand devices bloom

#5 : a singularity for interacting with tech → object evolution → handheld mri devices → fda approved mindcontrol → robots

This is a followup to the previous writeup on hardware. It was triggered by two things: 1) the release of the Humane pin and 2) me watching Scavengers Reign. The Humane demo generated a bit of buzz and every Twitter personality had to comment on it. The internet, being the internet, made fun of the launch and ridiculed the “bodycam”. Personally I’m excited for new tech. Finally we’re seeing something that is *not* a clone of the iPhone. The second thing, which almost dragged me into a philosophical thinking mode, was Scavengers Reign. It’s both hard and easy to talk about it without spoilers. It is a very trippy animated series, with both the visuals and the storyline executed with an artist’s touch. The style reminded me of works by Jean Giraud, and the story is something that viewers have to figure out by themselves. It very much got me thinking about how far one can stretch the narrative of tools and devices. Highly recommended.

Feels like hardware is having a moment.

I’m not sure I’m ready for a future where everyone records everyone, but here we are. I don’t have too much optimism for privacy and decency: all rules will be broken, there’s just too much money involved. And it has already happened. OpenAI, Meta and everyone else just hoovered up all the data they could get their hands on from the internet. As long as the cycle of more data = better service continues, we will see more data being gathered by all means possible.

As with any tool, it’s a double-edged sword. The recent releases (Humane, Meta glasses or Rewind) are all potentially recording instruments that could (and I’m not sure why they wouldn’t) record in every modality available to them: video, audio and anything else that might seem valuable and for which there’s a sensor.

One could argue that Apple, or another giant, could have done it all already (and maybe has). But they would face an infinite amount of questioning as soon as they tried to implement an *open* information gathering scheme.

It’s interesting that the play is always the same. People talk about valuing privacy and not wanting to let Big Brother into their lives, but in the end they do. As with Facebook or TikTok, the allure of an amazing service is too much for our ape brain. That was the point that Facebook made (sure, we collect data and help advertisers target you, but you also get something in return, free stuff! who doesn’t like free stuff?) and it is what will fuel more data gathering. I’m sure we’re going to see many more devices that deliver amazing services, but in turn become black holes for information capture.

Let a thousand devices bloom.

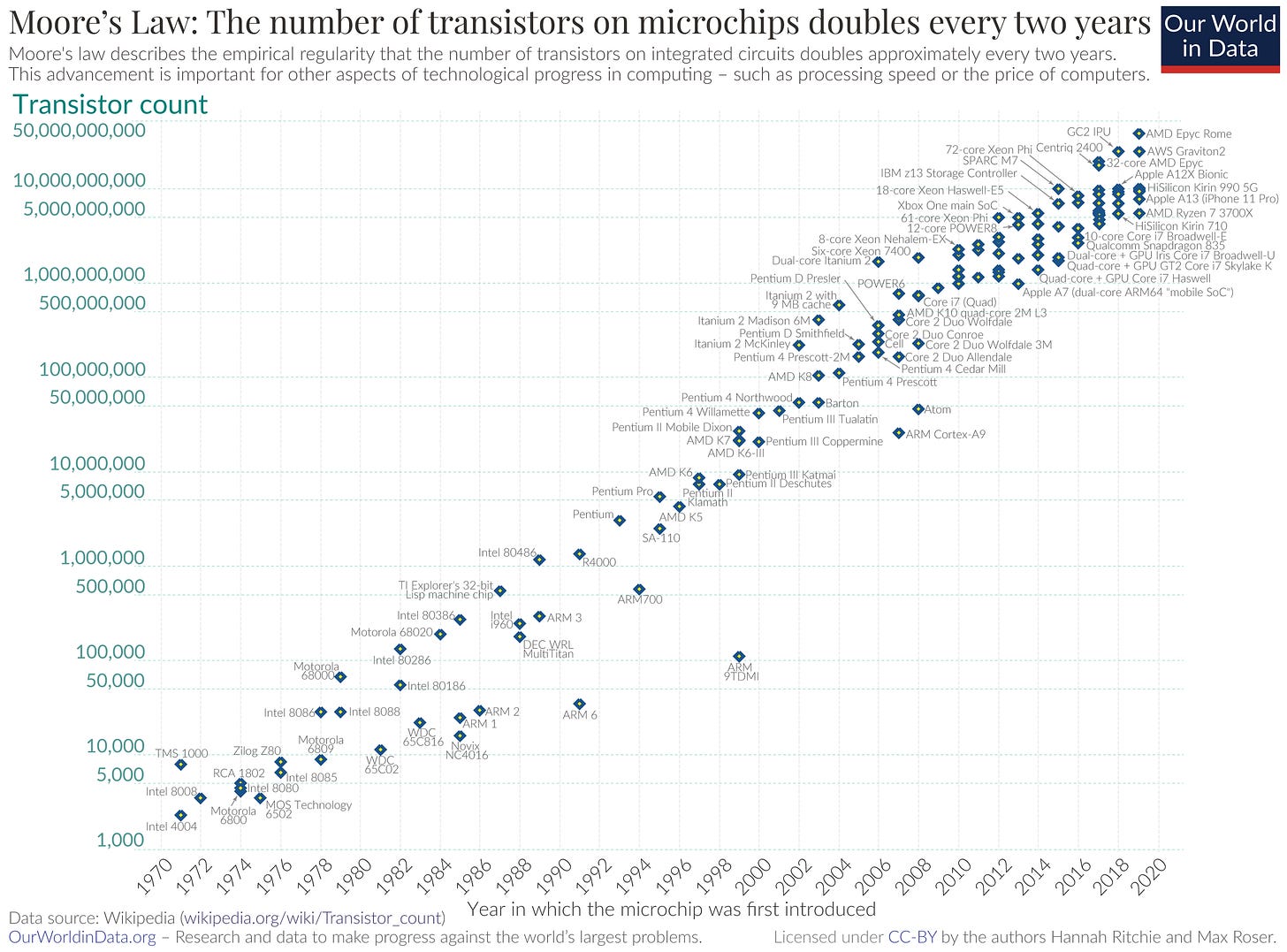

Batteries, microprocessors, screens, cameras: there has been quite a lot of innovation in the last 20 years alone. But there is something even more impressive than innovation in hardware, and that’s information processing. “Software is eating the world” becomes “AI is eating software”. All of the hardware innovation combined would be moot without the ability to actually process information inputs and deliver something useful from them.

Compared to 20-30 years ago we have supercomputers in our pockets with smartphones. What will the next 20-30 years bring? Will we have even more processing power in our phones? Other devices? Will we have intelligence access boost in the same way that we had computation access boost with microchips? Whatever it’s going to be, we are going to have much much more of it and many times cheaper. Moore’s law is alive and well.

There are a couple of reasons for many more affordances being created through real world interactions and new devices, beyond just pure Moore’s Law:

Lower cost to produce prototypes:

Producing and printing PCBs can now be done cheaper and on demand; you can even abstract away specific hardware with more generic off-the-shelf DIY pieces as they get miniaturised and systems on a chip become widely available;

3D printing advancements are making it cheaper to build physical prototypes (including experimenting with different materials), you don’t need to order a big batch or have access to industrial 3D printers, everything is on-demand;

Writing specialised software is becoming both easier and less expensive with the help of LLMs and more powerful systems that support major ecosystems (python/linux/etc), you don’t need previous experience with obscure hardware;

Innovation is (also) moving to hardware:

Capital will go into areas beyond software (e.g. as the SaaS world gets eaten away by AI);

More and more interesting data is a massive enabler for AI:

The little (big) dirty secret of AI is that it requires data. By all accounts the internet is not enough and lately OpenAI is literally begging other people for data. They promise to do OCR (optical character recognition) or ASR (automatic speech recognition) or both, they can make it private or public, they will clean up the data for you and I assume they will pay money if it comes to it (although they don’t mention it).

In the end, this Cambrian explosion will be absorbed by the inevitable evolution of the devices that we find ourselves using.

Object evolution: economies of scale, jobs to be done and aesthetics.

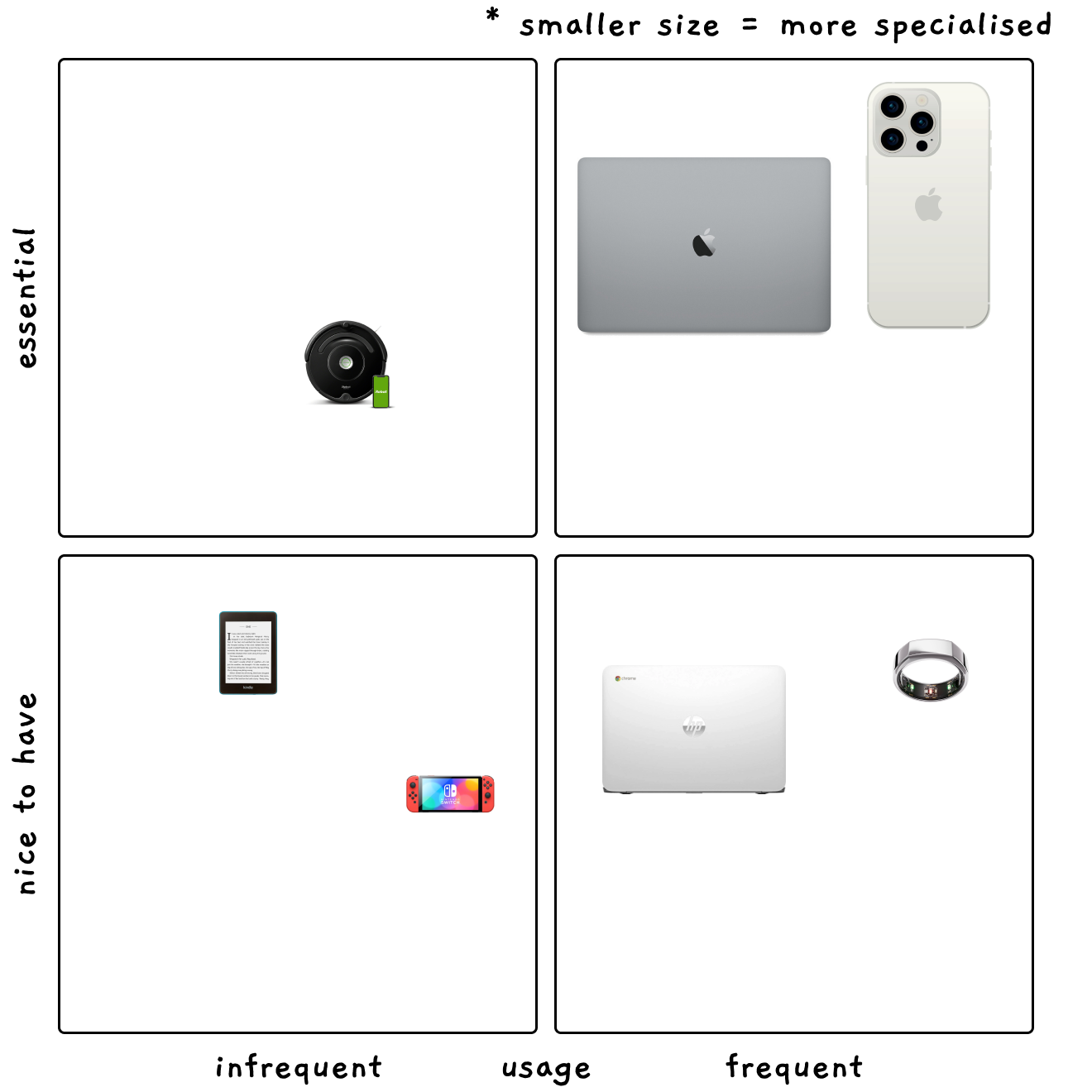

No matter how much you try to keep a minimalistic, device-free lifestyle, you will inevitably invite multiple connected gadgets into your home. I’ve tried to take an inventory of the various objects that we are using:

2x working MacBooks, 2x working small laptops, 2 desktops, 2x Oura rings, several smartphones (multiple iPhones, Samsung, etc), Nintendo Switch, connected scales, Apple Watch, 2 Kindle readers, a Roomba, the list goes on. I stopped counting after remembering that even our washing machine can now be accessed from a phone. The list is long both because you tend to have a lot of stuff with a family of 4 and because of the decreased cost of making any appliance “smart”.

Even though there’s some functional distinction (health tracking, communication, work), there’s also convergence, where a single device starts delivering more and more functionality. The phone is a clear example. Do you remember the last time you used a map when driving somewhere (or switched on a Garmin or a similar dedicated navigator)?

Over the last 20 years there has also been a push towards creating technology that is more ambient and less obtrusive. The human brain has a finite capacity for interacting with all of those objects, whether it's remembering to charge them, checking for updates, or general maintenance and sometimes even usage. This limitation suggests that even as we experience a 'Cambrian explosion' of startups flooding the market with innovative devices, there is an inevitable movement towards convergence.

This convergence could manifest in multifunctional devices that consolidate several needs into one, or in more integrated systems where devices communicate and collaborate, reducing the need for human interaction.

The great convergence has begun and we don’t know when it will end, but we know where it’s going!

Mr Spock is ready to trade in his tricorder.

A cheaper way of collecting data and a new way of analysing it with machine learning mean that we are on the brink of unlocking a much, much better way of understanding and interacting with our surroundings. The blending of technology into everyday lives will move beyond the iPhone. It seems we are very close to getting an upgrade in our mental and physical health as well as, believe it or not, automating laundry!

Health

One of the more magical experiences I’ve had with a device in the last couple of years, and something that I hadn’t expected, was with the Oura smart ring. I think it’s mostly due to a) solid engineering: the battery is good, it just works and you don’t need to maintain it too much, and b) all of the interfacing being done through the phone. That means that I’m interacting with it but really I’m just using my phone: less friction for me, more value delivered by the Oura ring. As the sensors, batteries and electronics become more and more miniaturised I think we will see more and more devices that help us understand, maintain and improve what’s happening inside our bodies. There are already several concepts that I’m personally very excited about:

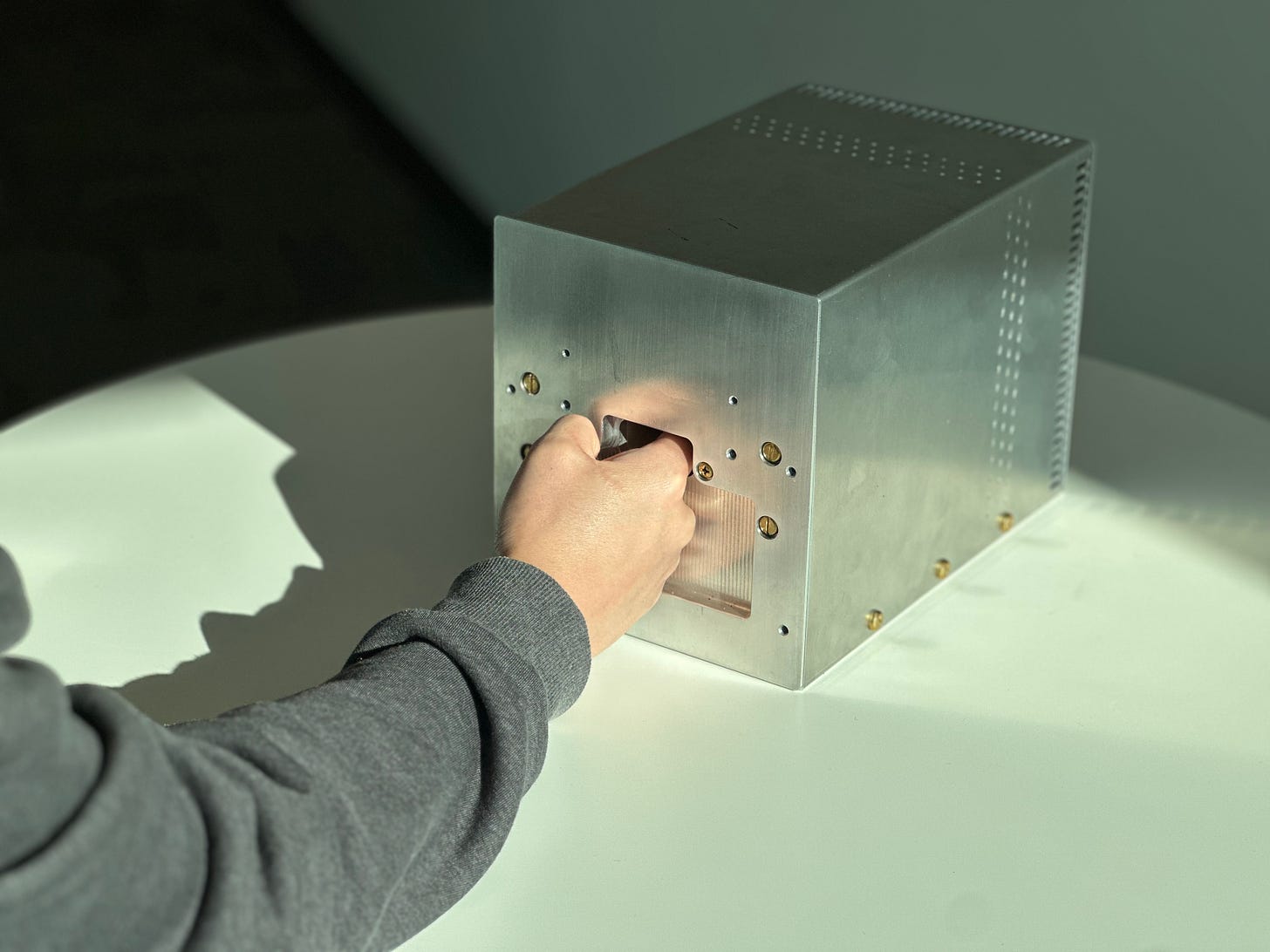

Miniature “MRI” to (non-invasively) measure glucose:

“For the first time, we have demonstrated that we can directly measure glucose non-invasively using magnetic resonance spectroscopy”, measuring molecules with a shoe-sized box, this is something straight from science fiction.

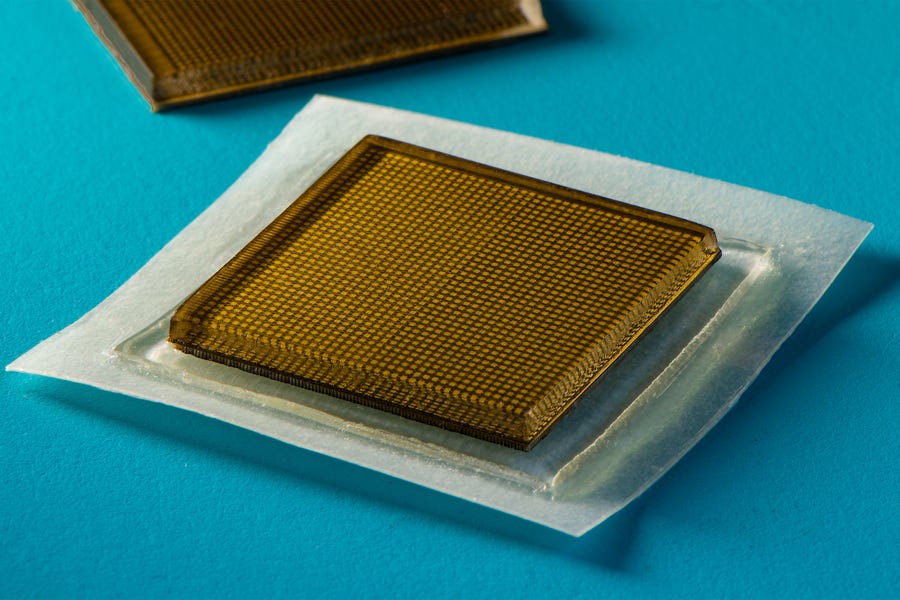

Sticker for continuous ultrasound measurements:

“MIT engineers develop stickers that can see inside the body”, pretty much as the title goes. It turns out that combining ML and piezoelectric elements gives continuous ultrasound imaging of internal organs. It’s 3mm thick and works for 2 days non-stop, potentially wirelessly.

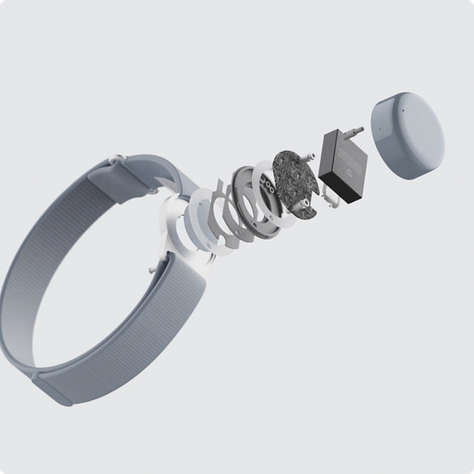

From left to right: Modular health bracelet design, brain-computer interface from Neuralink, a dog health measurement collar.

I actually hadn’t realised that the FDA approved human trials of the Neuralink device, which is amazing news (even if we don’t get Blade Runner-like neural interfaces… yet) and hopefully will allow for more affordable critical brain-related treatments.

We had a glimpse of both the scale and the interest with Theranos, which I think gave healthtech a bad aftertaste: it was a) too early and b) a scam. Hopefully this time we’ll get a better version of both.

Ambient technology in our spaces.

Environment:

There’s no reason the places we live and work can’t be magical. All the smart speakers, sensors and “smart home” concepts, which frankly were quite dumb, might finally fulfil the promise of a space that works and feels like science fiction.

When I first saw Tonari launch their wall-sized, always-on, low-latency “portal” screen, I instantly wanted to get it for our meeting rooms. I probably just want that magical feeling of having a window to another place anywhere in the world. I also think the less friction there is to communicate, the better. “Can you see my screen?” is a little stab at how fluently the meeting goes.

Autonomous robots:

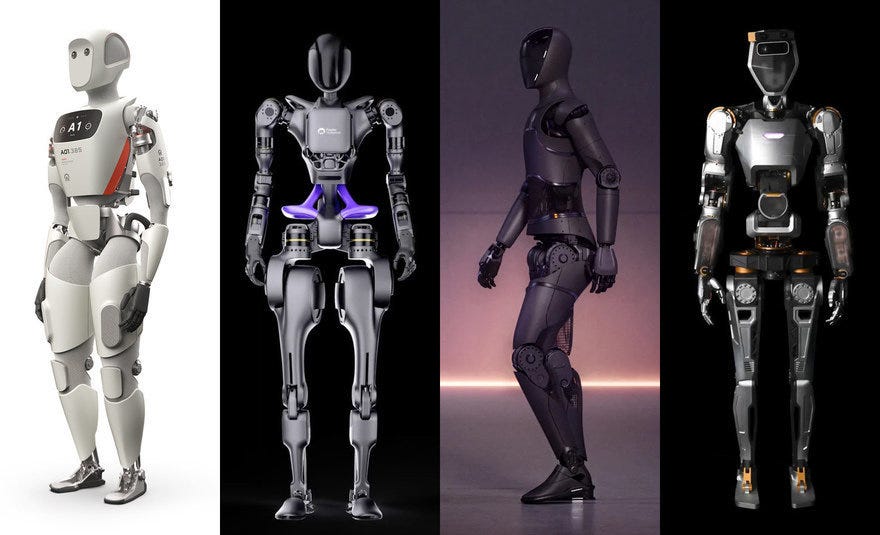

Forget self-driving cars, the next frontier is autonomous human-like robots. It’s not clear if there’s any technical blocker to actually delivering a working humanoid robot to the market. But it seems that in the last couple of years multiple companies have achieved some remarkable results: robots can (finally!) fold laundry, move objects around, convincingly do yoga poses and even do backflips. It will be some time before they are cheap enough to afford in a home, but how long?

Here is a nice roundup on how industry is already using robots (with a focus on Amazon) and on the next frontier: humanoid general-purpose robots.

Left to right: Apptronik Apollo, Fourier Intelligence GR-1, Figure, Sanctuary AI Phoenix

Below: Boston Dynamics

Final thoughts.

It took us around 100 years to go from the dystopian robot in the underground (Metropolis) to the Namaste Tesla robot (good vibes anyone?). And while this all looks like science fiction today, it will for sure take less than 100 years to get most of it, if not more.